Powering AI Responsibly: Reflections from the London Tech Show

Georgia Moore, Senior Energy Consultant at Twin & Earth, attended the London Tech Show to explore the future of AI infrastructure and sustainable data centres.

The London Tech Show brought together many of the leading voices in data centre design, energy infrastructure, and sustainability. The event showcased remarkable innovation, but it also highlighted the scale of the challenges accompanying the rapid expansion of AI infrastructure.

The Scale of What’s Coming

The industry is entering a period of extraordinary growth. By 2030, the world is expected to build around 240 GW of new data centre capacity, roughly doubling global capacity compared with the end of last year. This expansion is being driven primarily by demand for AI computing.

Dense GPU clusters and large-scale AI training workloads are pushing facilities into territory they were never designed for. Rack densities approaching one megawatt are now being discussed, requiring new electrical architectures, high‑voltage DC distribution, advanced liquid cooling, and modular construction methods capable of keeping pace with rapidly evolving GPU technology.

One of the central themes running through the show was not just how to build new infrastructure, but how to ensure it remains relevant as technology continues to evolve. The data centres of 2027 may look very different from those being designed today.

The Energy Challenge

All of this computing power demands enormous amounts of electricity. In the UK, data centres already account for around 2% of national electricity consumption, a figure set to rise significantly as AI infrastructure expands.

Yet access to power is rapidly becoming one of the biggest constraints on new developments. While computing capacity continues to scale quickly, grid infrastructure and connection timelines are struggling to keep pace. Several speakers at the London Tech Show identified electricity availability as one of the most critical limiting factors for the next generation of AI facilities.

This is already shaping development decisions. Industry experts warn that delays in securing grid connections are pushing some projects towards temporary on-site gas generation simply to get facilities operational. While this may provide short-term certainty, it sits in clear tension with the sector’s Net Zero ambitions.

At the same time, the industry is increasingly exploring ways for data centres to integrate more closely with energy systems by supporting renewable power projects, incorporating storage, and working with communities to ensure new infrastructure delivers broader local benefits.

Energy for AI, AI for Energy

Alongside discussions about power constraints, a broader narrative emerged around the relationship between “Energy for AI” and “AI for Energy”.

On one hand, the rapid expansion of AI infrastructure is driving a significant increase in electricity demand, placing growing pressure on electricity networks and energy infrastructure. On the other hand, many industry leaders argue that AI could also become a powerful tool for improving the energy system itself. Advanced analytics and machine learning are increasingly being used to optimise grid operations, forecast demand, and support the integration of renewable generation. Within data centres themselves, AI-enabled modelling and simulation tools are helping engineers design and innovate more efficiently.

Yet this framing can obscure the scale of the immediate challenge. The environmental pressures associated with AI infrastructure remain significant, and highlighting AI’s potential to optimise energy systems in the future does not diminish the strain it is placing on them today.

The Digital Twin Opportunity

One of the most compelling sessions at the show came from Siemens, focusing on the growing role of digital twin technology in data centre development.

A simulation-first design approach allows engineers to model power systems, cooling strategies, and operational performance long before a facility is built. For high-density AI environments, this ability to test scenarios and optimise design choices virtually is becoming increasingly valuable.

AI is also becoming embedded within these modelling tools themselves. Generative AI can automate repetitive design tasks, AI co-pilots can support complex engineering decisions, and agentic AI systems may eventually help manage operational environments in real time.

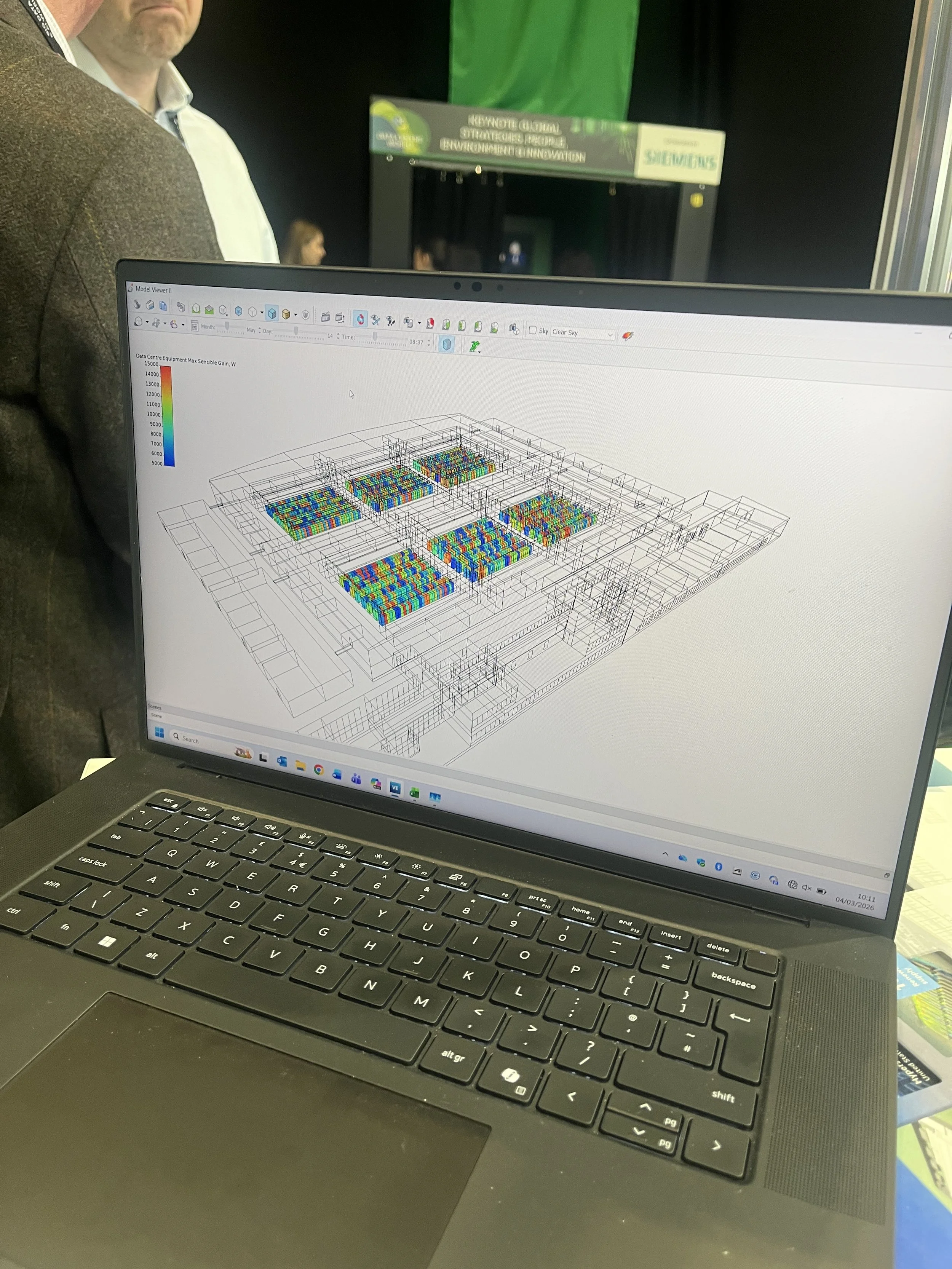

We also spoke with IES VE, who demonstrated how detailed energy modelling can quantify consumption across an entire data centre environment. Walking us through a live model, they showed how Power Usage Effectiveness (PUE), liquid cooling loads, HVAC interventions, and free cooling opportunities can be accurately simulated and interpreted within the virtual environment.
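
For readers less familiar with the metric, PUE is the ratio of a facility's total energy use to the energy used by its IT equipment alone, so a value closer to 1.0 means less overhead from cooling, power distribution, and other supporting systems. A minimal Python sketch of that calculation is shown here. The function name and all figures are hypothetical illustrations, not values from the model demonstrated at the show.

```python
def power_usage_effectiveness(it_kwh: float, cooling_kwh: float, other_kwh: float) -> float:
    """Return PUE = total facility energy / IT equipment energy.

    All inputs are energy totals over the same period (for example, one year).
    """
    if it_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if cooling_kwh < 0 or other_kwh < 0:
        raise ValueError("Overhead energy cannot be negative")

    # Total facility energy is the IT load plus every supporting load.
    total_kwh = it_kwh + cooling_kwh + other_kwh
    return total_kwh / it_kwh


# Hypothetical annual figures, chosen only to illustrate the arithmetic.
baseline = power_usage_effectiveness(it_kwh=1000.0, cooling_kwh=400.0, other_kwh=100.0)

# Same IT load with cooling energy halved, e.g. through more free cooling.
improved = power_usage_effectiveness(it_kwh=1000.0, cooling_kwh=200.0, other_kwh=100.0)

print(f"Baseline PUE: {baseline:.2f}")
print(f"Improved PUE: {improved:.2f}")
```

Comparing the two results shows why cooling strategy features so heavily in early-stage modelling: with the IT load unchanged, any reduction in cooling energy lowers PUE directly.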

From an energy consultancy perspective, these tools reflect what we do at Twin & Earth every day. Early-stage modelling enables us to stress-test energy assumptions, evaluate cooling strategies, and identify opportunities to reduce environmental impact before construction begins.

Where Does This Leave Us?

The level of innovation on display at the London Tech Show was encouraging. Liquid cooling technologies are maturing rapidly, digital twins are improving design intelligence, and modular construction methods are accelerating deployment timelines.

Perhaps most importantly, the industry conversation is beginning to shift. Data centres are increasingly being viewed not just as energy consumers, but as potential partners within the wider energy system through renewable power procurement, grid flexibility, and waste heat recovery. As AI infrastructure continues to expand, the key question is not whether the growth will happen, but how responsibly it can be delivered.

At Twin & Earth, we work with clients to model, measure, and reduce energy consumption in data centre facilities, helping ensure that the infrastructure powering the digital economy is designed to perform efficiently and sustainably over the long term.